Anthropic, the AI research company, has reportedly reopened discussions with the US Defense Department in an effort to reach a new agreement regarding the use of its artificial intelligence models. This development comes after previous contract negotiations fell apart amid concerns over surveillance-related language and government designations that threatened the company’s operational status.

Background of the Dispute

Anthropic initially signed a $200 million contract with the US Defense Department in 2025 to supply AI services. However, negotiations broke down when the company raised objections to certain contract language related to surveillance practices. The government sought to include provisions allowing analysis of bulk-acquired data, which Anthropic viewed as conflicting with its ethical safeguards.

Following the stalemate, the Pentagon threatened to label Anthropic a “supply chain risk,” a designation typically reserved for Chinese firms, and even considered canceling its existing contract. This led to a directive from President Trump for government agencies to cease use of Anthropic’s technology, although a six-month phase-out period permitted limited continued deployment.

Renewed Talks and Key Issues

According to reports, Anthropic CEO Dario Amodei has resumed negotiations with Emil Michael, the Under Secretary of Defense for Research and Engineering. Central to these talks is agreement on contract language that ensures Anthropic’s technology will not be used for mass surveillance. Amodei has indicated that a particular clause about analyzing bulk-acquired data was a primary concern and a sticking point in previous talks.

The Department of Defense has reportedly offered to accept some of Anthropic’s conditions if certain phrases are removed from the contract. This reopening of dialogue signals a possible path forward to resolve disagreements that once placed the company’s government relationship in jeopardy.

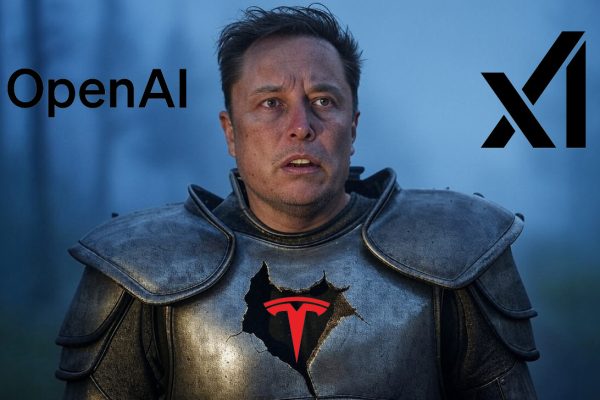

Comparisons with OpenAI’s Defense Department Deal

Anthropic’s dispute contrasts with OpenAI’s recent success in solidifying its Defense Department partnership. OpenAI CEO Sam Altman publicly supported the decision not to designate Anthropic as a supply chain risk and announced that OpenAI had agreed to amend its own contract to explicitly prohibit the use of its models for mass surveillance of Americans.

Altman has also emphasized that the government controls operational decisions when AI technology is used by the military, drawing a line between the company’s role as a supplier and how its technology is applied in defense operations.

Implications for AI Ethics and Government Use

The Anthropic case highlights the ongoing challenge AI companies face in balancing ethical commitments with government defense contracts. The company’s reluctance to permit mass-surveillance uses reflects deep concerns about privacy and human rights in the deployment of AI technology.

These negotiations and the conditions attached to Defense Department contracts may influence broader industry standards and government policies concerning the responsible use of AI in national security contexts.

Future Outlook

The outcome of the renewed talks between Anthropic and the US Defense Department remains to be seen. If an agreement is reached, it could restore Anthropic’s access to government projects and set a precedent on surveillance safeguards in AI contracts.

The evolving dynamics between government agencies and AI developers continue to shape the landscape for how advanced AI technology is integrated into sensitive and critical applications.